The strategic research agenda in robotics (“Robotic Visions to 2020 and beyond”, EUROP – CARE, 07/2009) predicts that, in the future, robot co-workers will assist and cooperate more closely with humans in many different circumstances. As a consequence, future technologies should take into account improvements in the interaction between humans and robots, independently of place (at work, in public, at home, on the move), and in both tele-operated and individual task modes. The future vision of robotic co-workers includes robot assistants in industrial environments and in security, robots for the physically challenged, rehabilitation robots, personal robots, and others.

The ASTROMOBILE proposal addresses these themes and focuses on the development and deployment of a smart robotic assistive platform, with particular attention to the problems of navigation and interaction. In particular, the objective of the proposal is to demonstrate that a smart robotic mobile platform, with an embodied bidirectional interface with the world and the users, can be conceived to improve services useful to the well-being of humans or equipment.

This objective is pursued by adapting a robotic interactive platform, built on a mobile robot, and integrating it in intelligent environments, so that it becomes an adaptable, modular device able to carry out several tasks. The main functionalities of the system are related to communication, reminder functions, monitoring and safety.

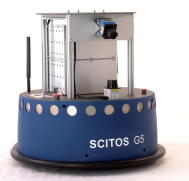

The robot chosen to demonstrate the basic thesis of the project is an available mobile platform, the SCITOS-G5 from MetraLabs. The robotic platform will be developed by combining theories and competences from Robotics, Information and Communication Technologies (ICT) and Ambient Assisted Living (AAL).

Two research lines will be considered: one on navigation in unstructured environments, using sensors on the robot and a smart pervasive sensor network in the environment; and another on interaction with the user, using multimodal interfaces.

In the first research line, the robot will be able to move autonomously anywhere in its workspace, using its on-board sensors for localization (odometry, inertial, laser, infrared, ultrasound). It will also use a pervasive sensor network (the RSSI signal of a ZigBee sensor network) to improve its localization capabilities, as a kind of indoor GPS. Further, interaction between the robot and the environment by means of the pervasive sensor network will lead to a form of awareness, through which the robot will know the position of the user or object it has to reach in the workspace.
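As an illustration of how RSSI readings from fixed network nodes could be turned into a position estimate, the following is a minimal sketch and not the project's actual method. It assumes a log-distance path-loss model and a linearised least-squares trilateration in 2D; the calibration values (`rssi_at_1m`, `path_loss_exponent`), the function names and the anchor layout are all hypothetical.

```python
import math

def rssi_to_distance(rssi_dbm, rssi_at_1m=-45.0, path_loss_exponent=2.5):
    """Invert the log-distance path-loss model to get a range in metres.

    Both calibration parameters are assumed values; in practice they
    would be measured for the specific radio and environment.
    """
    return 10 ** ((rssi_at_1m - rssi_dbm) / (10.0 * path_loss_exponent))

def trilaterate(anchors, distances):
    """Least-squares 2D position from at least 3 anchor positions and ranges."""
    if len(anchors) < 3 or len(anchors) != len(distances):
        raise ValueError("need at least 3 anchors, one distance per anchor")
    (x0, y0), d0 = anchors[0], distances[0]
    # Subtracting the first circle equation from each of the others gives
    # linear equations: 2(xi-x0)x + 2(yi-y0)y = d0^2 - di^2 + xi^2 - x0^2 + yi^2 - y0^2
    rows, rhs = [], []
    for (xi, yi), di in zip(anchors[1:], distances[1:]):
        rows.append((2.0 * (xi - x0), 2.0 * (yi - y0)))
        rhs.append(d0**2 - di**2 + xi**2 - x0**2 + yi**2 - y0**2)
    # Normal equations (A^T A) p = A^T b, solved in closed form for the 2x2 case.
    a11 = sum(r[0] * r[0] for r in rows)
    a12 = sum(r[0] * r[1] for r in rows)
    a22 = sum(r[1] * r[1] for r in rows)
    b1 = sum(r[0] * b for r, b in zip(rows, rhs))
    b2 = sum(r[1] * b for r, b in zip(rows, rhs))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is not determined")
    return (a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det

# Hypothetical node positions (metres) and noise-free ranges to a robot at (1, 2).
anchors = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0), (4.0, 3.0)]
ranges = [math.hypot(1.0 - ax, 2.0 - ay) for ax, ay in anchors]
estimate = trilaterate(anchors, ranges)
```

Real RSSI measurements are noisy and affected by multipath, so an estimate of this kind would normally be fused with the on-board sensors rather than used alone.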

In the second research line, the usability of the robot and its interaction with the user will be conceived and designed around natural human strategies. For this reason, the assistive mobile robot will be controlled by end users through a multimodal interface that combines audio, video and touch information. In particular, this interaction will be based on visual and speech recognition. The robot will be able not only to process video-tactile information obtained from the touch screen embedded on the robot, but also to recognize the users’ spoken language, to identify commands and to associate them with the tasks desired by users. At the end of the project, the system will also be assessed with samples of potential end users in real scenarios of use, to test the usability and acceptability of the proposed system.
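The step of associating a recognized command with a task can be pictured with a very small sketch. It assumes the speech recognizer has already produced a text string, and it uses plain keyword matching, which is only a stand-in for whatever language understanding the project adopts. The keyword table and the function name are hypothetical; the task names follow the main functionalities of the system (communication, reminder, monitoring, safety).

```python
from typing import Optional

# Hypothetical keyword table mapping spoken words to system functionalities.
TASK_KEYWORDS = {
    "communication": ("call", "phone", "message"),
    "reminder": ("remind", "remember", "schedule"),
    "monitoring": ("check", "monitor", "status"),
    "safety": ("help", "emergency", "alarm"),
}

def command_to_task(utterance: str) -> Optional[str]:
    """Return the first task whose keywords appear in the utterance, else None."""
    # Lower-case and strip simple punctuation so "Help!" matches "help".
    words = [w.strip(".,!?") for w in utterance.lower().split()]
    for task, keywords in TASK_KEYWORDS.items():
        if any(word in keywords for word in words):
            return task
    return None

task = command_to_task("Please remind me to take my pills")
```

A command that matches no keyword returns `None`, which the interface could use to ask the user to repeat or to fall back on the touch screen.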

The expected impact is on the user’s side as well as on the industrial side. On the user’s side, the project is intended to demonstrate and assess how much the well-being of humans can be increased with the proposed robotic solution. On the industrial side, the project can help identify the market for service robotics and favour the competitiveness of Europe in this field.